How to Detect Faces on iOS Using Vision and Machine Learning

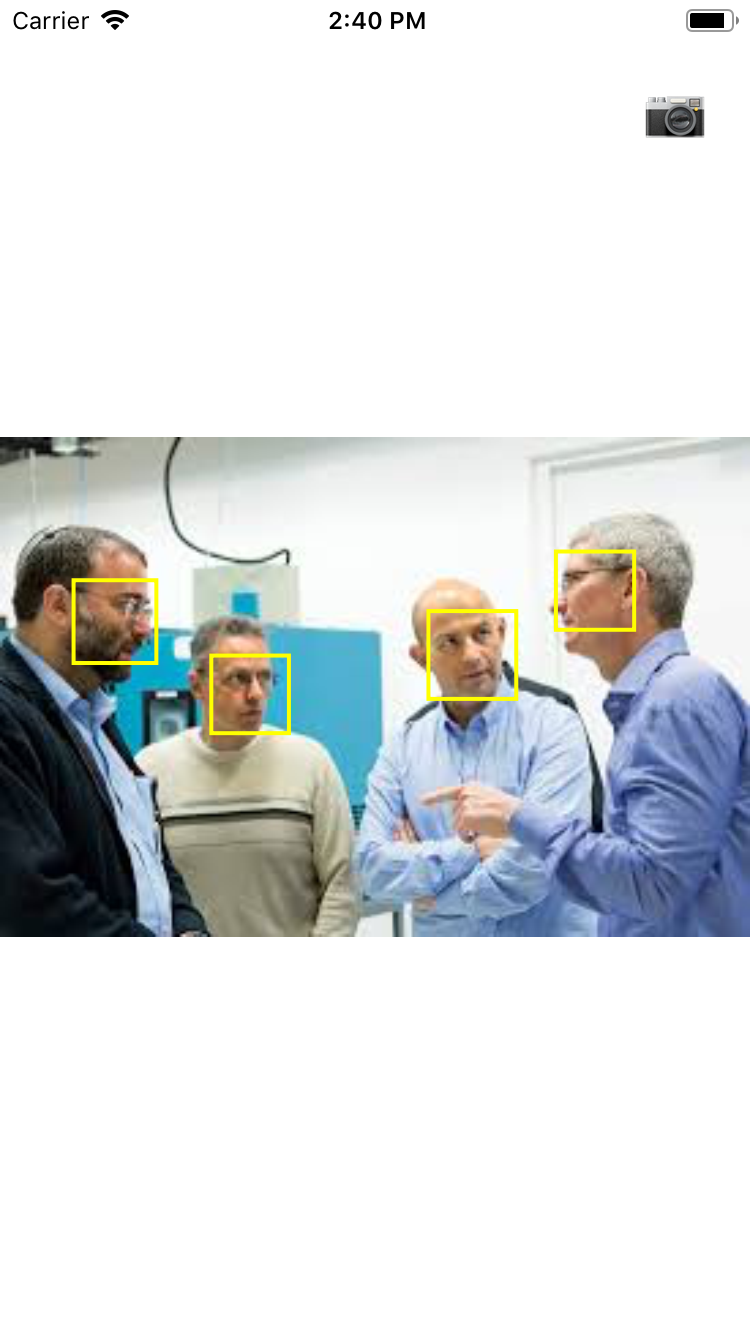

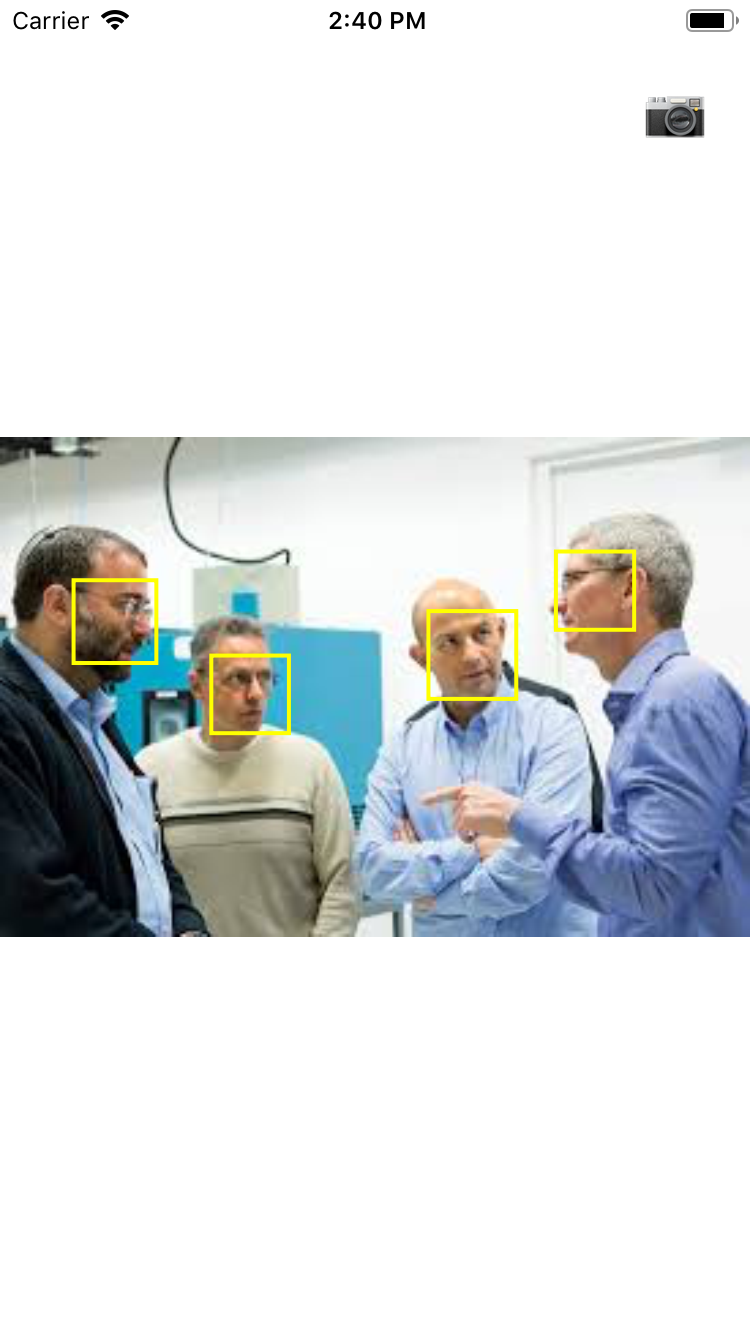

Apple's Vision framework offers many tools for image processing and image-based machine learning, and one of them is face detection. This tutorial will show you how to detect faces using Vision and highlight them on the screen. By the end of the tutorial you'll have an app that looks like this:

You can use face detection to crop images, focus on the important parts of an image, or let users easily tag their friends in a photo. You can find the full code of this tutorial on GitHub.

Setting Up the UI

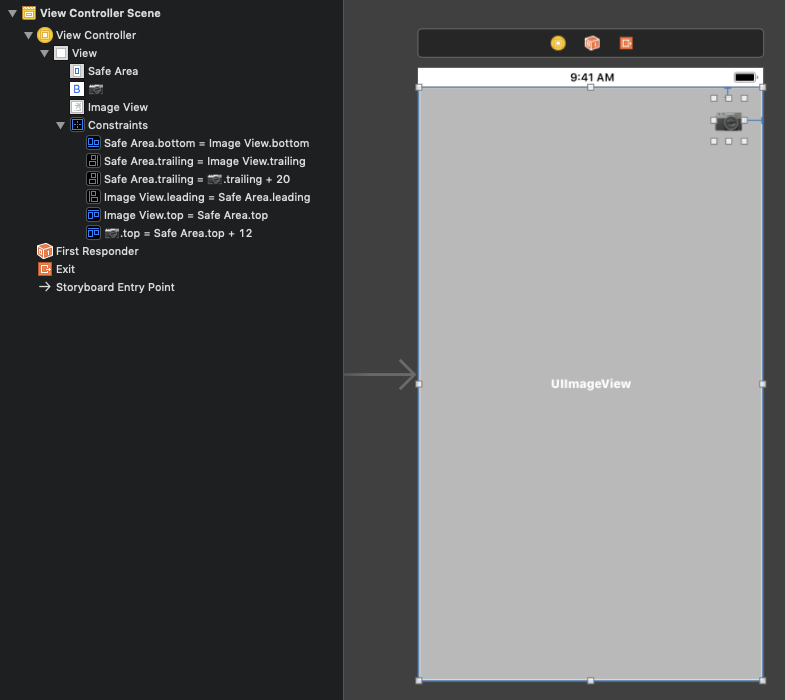

We'll start by making sure we can see and pick new photos to detect faces on. Create a new single-view app project in Xcode, and open up Main.storyboard.

Start by dragging an image view from the object library onto the storyboard. Once it's there, add four new constraints to it so that it's fixed to the leading, trailing, top and bottom edges of the safe area. This image view will show the image the faces are being detected on.

Next, drag a button to the storyboard, and add two new constraints:

- make the button's trailing space equal to the safe area trailing space with a constant of 20

- make the button's top space equal to the safe area top space with a constant of 20

This should pin the button to the top-right corner of the screen. You'll use this button to show an image picker, so give it a title like "Pick image". I used a camera emoji. By the end of this, your storyboard should look like this:

Next, open ViewController.swift and connect the storyboard's image view to a new IBOutlet called imageView.

```swift
@IBOutlet weak var imageView: UIImageView!
```

Next, add a new IBAction from the button in the storyboard, and call it cameraButtonTapped.

```swift
@IBAction func cameraButtonTapped(_ sender: UIButton) {

}
```

Next, add the following method to the class:

```swift
func showImagePicker(sourceType: UIImagePickerController.SourceType) {
    let picker = UIImagePickerController()
    picker.sourceType = sourceType
    picker.delegate = self
    present(picker, animated: true)
}
```

This method shows a new image picker as a modal screen, configured with the source type passed to it. At this point you'll get a compiler error, because the picker's delegate must conform to both UIImagePickerControllerDelegate and UINavigationControllerDelegate, and ViewController doesn't conform to either yet. Let's fix that next by adding the following extension to the bottom of the file:

```swift
extension ViewController: UIImagePickerControllerDelegate,
                          UINavigationControllerDelegate {

    func imagePickerController(
        _ picker: UIImagePickerController,
        didFinishPickingMediaWithInfo info: [UIImagePickerController.InfoKey: Any]) {

        guard let image = info[.originalImage] as? UIImage else {
            return
        }

        imageView.image = image
        dismiss(animated: true)
    }
}
```

This will fetch the picked image and show it on the view controller's image view. It will then dismiss the modal image picker.

We now have the code for the image picker in place, but we're not calling showImagePicker anywhere yet. We'll do that from cameraButtonTapped, when the button gets tapped.

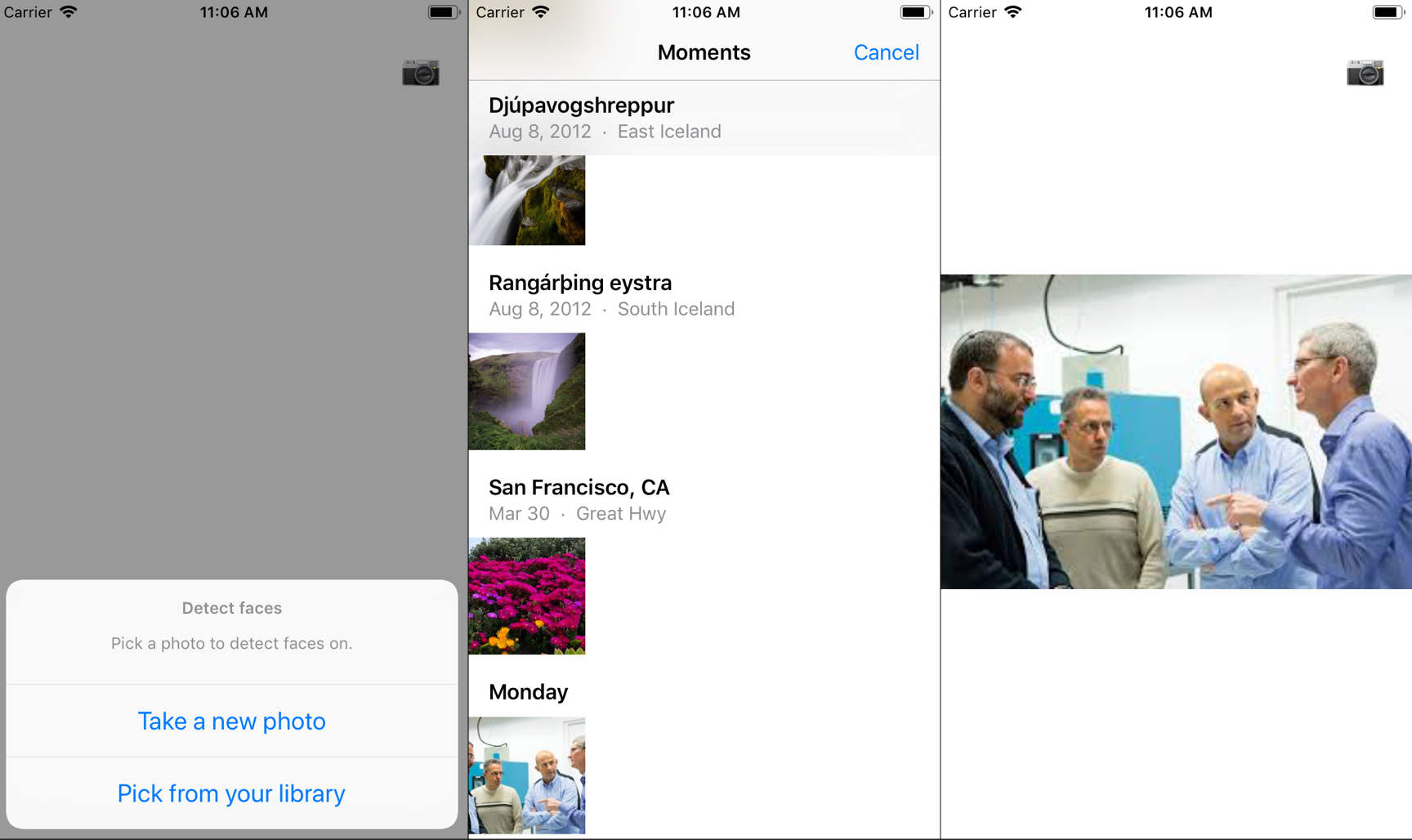

First we'll present an action sheet to let the user pick whether they want to take a new photo or pick an existing one from their library. Add the following code to cameraButtonTapped:

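```swift
let actionSheet = UIAlertController(
    title: "Detect faces",
    message: "Pick a photo to detect faces on.",
    preferredStyle: .actionSheet)

let cameraAction = UIAlertAction(
    title: "Take a new photo",
    style: .default,
    handler: { [weak self] _ in
        self?.showImagePicker(sourceType: .camera)
    })

let libraryAction = UIAlertAction(
    title: "Pick from your library",
    style: .default,
    handler: { [weak self] _ in
        self?.showImagePicker(sourceType: .savedPhotosAlbum)
    })

// The camera isn't available everywhere (the Simulator has none),
// so only offer it when the device actually has one.
if UIImagePickerController.isSourceTypeAvailable(.camera) {
    actionSheet.addAction(cameraAction)
}
actionSheet.addAction(libraryAction)
actionSheet.addAction(UIAlertAction(title: "Cancel", style: .cancel))

// On iPad, action sheets are shown as popovers and need an anchor.
actionSheet.popoverPresentationController?.sourceView = sender
actionSheet.popoverPresentationController?.sourceRect = sender.bounds

present(actionSheet, animated: true)
```

The user can pick from two options: selecting an image from their library or taking a new photo. Both options call showImagePicker, just with a different source type. Taking a photo also requires an NSCameraUsageDescription entry in your app's Info.plist; without it, the app will crash when it tries to access the camera.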

We'll add one more tweak: add the following line to the bottom of viewDidLoad:

```swift
imageView.contentMode = .scaleAspectFit
```

This will make sure the image isn't stretched when it's displayed on the image view.

If you run the project now, you should be able to pick an image from your library and see it appear in the image view.

Detecting Faces

Now that we have our image, we can detect faces on it! To do this, we'll use Vision, Apple's built-in framework for image processing and image-based machine learning.

Vision provides out-of-the-box APIs for detecting faces and facial features, rectangles, text and barcodes (including QR codes). Additionally, you can combine it with custom Core ML models to build things like custom object classifiers. For more information on the different machine learning frameworks in iOS, check out the introduction to machine learning on iOS.

Before we can start using Vision, we need to import it, so add the following import to the top of the file:

```swift
import Vision
```

We'll start by adding a new method to the class called detectFaces:

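```swift
func detectFaces(on image: UIImage) {
    // Vision works on CGImages; bail out instead of crashing
    // if this UIImage isn't backed by one.
    guard let cgImage = image.cgImage else { return }

    let handler = VNImageRequestHandler(
        cgImage: cgImage,
        options: [:])
}
```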

The core of Vision is the request handler. A handler performs operations on a single image, which is why we create it with the image we want to analyze. These operations are called "requests", and a handler can perform multiple requests at once.
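One thing the handler above doesn't account for is image orientation. Photos taken with the iPhone camera are usually stored rotated, with a UIImage orientation such as .right, and `VNImageRequestHandler(cgImage:options:)` only sees the raw pixels. Detection on those photos can be less reliable, and the returned boxes won't line up with the image as UIImageView displays it. Here is a minimal sketch of one fix. It maps UIImage.Orientation to CGImagePropertyOrientation and passes the result to the handler's `cgImage:orientation:options:` initializer. The helper name `makeFaceRequestHandler(for:)` is hypothetical.

```swift
import UIKit
import ImageIO
import Vision

// Maps UIKit's orientation enum onto the ImageIO one that Vision expects.
// The two enums have the same cases but different raw values, so a
// rawValue-based conversion would be wrong.
extension CGImagePropertyOrientation {
    init(_ orientation: UIImage.Orientation) {
        switch orientation {
        case .up: self = .up
        case .upMirrored: self = .upMirrored
        case .down: self = .down
        case .downMirrored: self = .downMirrored
        case .left: self = .left
        case .leftMirrored: self = .leftMirrored
        case .right: self = .right
        case .rightMirrored: self = .rightMirrored
        @unknown default: self = .up
        }
    }
}

// Hypothetical helper: builds a request handler that tells Vision how the
// pixels are rotated, so results match the image as it is displayed.
func makeFaceRequestHandler(for image: UIImage) -> VNImageRequestHandler? {
    guard let cgImage = image.cgImage else { return nil }
    return VNImageRequestHandler(
        cgImage: cgImage,
        orientation: CGImagePropertyOrientation(image.imageOrientation),
        options: [:])
}
```

With this in place, detectFaces could start with `guard let handler = makeFaceRequestHandler(for: image) else { return }` instead of building the handler inline.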

Since we need to detect faces, we can use the built-in VNDetectFaceRectanglesRequest, which lets us find the bounding boxes of faces on our image.

Add the following property to the class:

```swift
lazy var faceDetectionRequest =
    VNDetectFaceRectanglesRequest(completionHandler: self.onFacesDetected)
```

A single request can be performed on multiple images, which is why it's good practice to create one shared request object instead of creating a new one every time detectFaces is called.
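To make the reuse idea concrete, here is a small sketch that runs one shared face-rectangles request over several images. The function name `faceCounts(in:using:)` is hypothetical. The sketch relies on `perform(_:)` being synchronous, so the request's results are ready as soon as the call returns. It runs the images one at a time, which sidesteps any question of sharing a single request across threads.

```swift
import CoreGraphics
import Vision

// Hypothetical helper: reuses one request object for every image and
// returns the number of faces found in each.
func faceCounts(in images: [CGImage],
                using request: VNDetectFaceRectanglesRequest) -> [Int] {
    images.map { (cgImage: CGImage) -> Int in
        let handler = VNImageRequestHandler(cgImage: cgImage, options: [:])
        do {
            // perform(_:) blocks until the request has run on this image,
            // after which request.results describes this image.
            try handler.perform([request])
            return request.results?.count ?? 0
        } catch {
            print("Face detection failed: \(error)")
            return 0
        }
    }
}
```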

Note that there is also a VNDetectFaceLandmarksRequest. Alongside face bounding boxes, it detects facial features such as the eyes, nose and mouth. Because it does more work, it's slower than VNDetectFaceRectanglesRequest, so we won't be using it here.
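If you do need facial features later, here is a minimal sketch of the landmarks request. It still produces VNFaceObservation results, but with their `landmarks` property filled in. Only the left eye and nose are shown, and the print statements stand in for whatever you would actually draw. Landmark points are normalized to the face's bounding box rather than to the whole image, so they need one more conversion step before drawing.

```swift
import CoreGraphics
import Vision

// Sketch: a landmarks request whose completion handler reads two regions.
let landmarksRequest = VNDetectFaceLandmarksRequest { request, error in
    if let error = error {
        print("Landmark detection failed: \(error)")
        return
    }
    guard let faces = request.results as? [VNFaceObservation] else { return }

    for face in faces {
        if let leftEye = face.landmarks?.leftEye {
            print("Left eye outline has \(leftEye.pointCount) points")
        }
        if let nose = face.landmarks?.nose {
            // These points are relative to face.boundingBox, not the image.
            print("Nose points: \(nose.normalizedPoints)")
        }
    }
}
```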

Next, we'll implement the completion handler by adding the following method to the class:

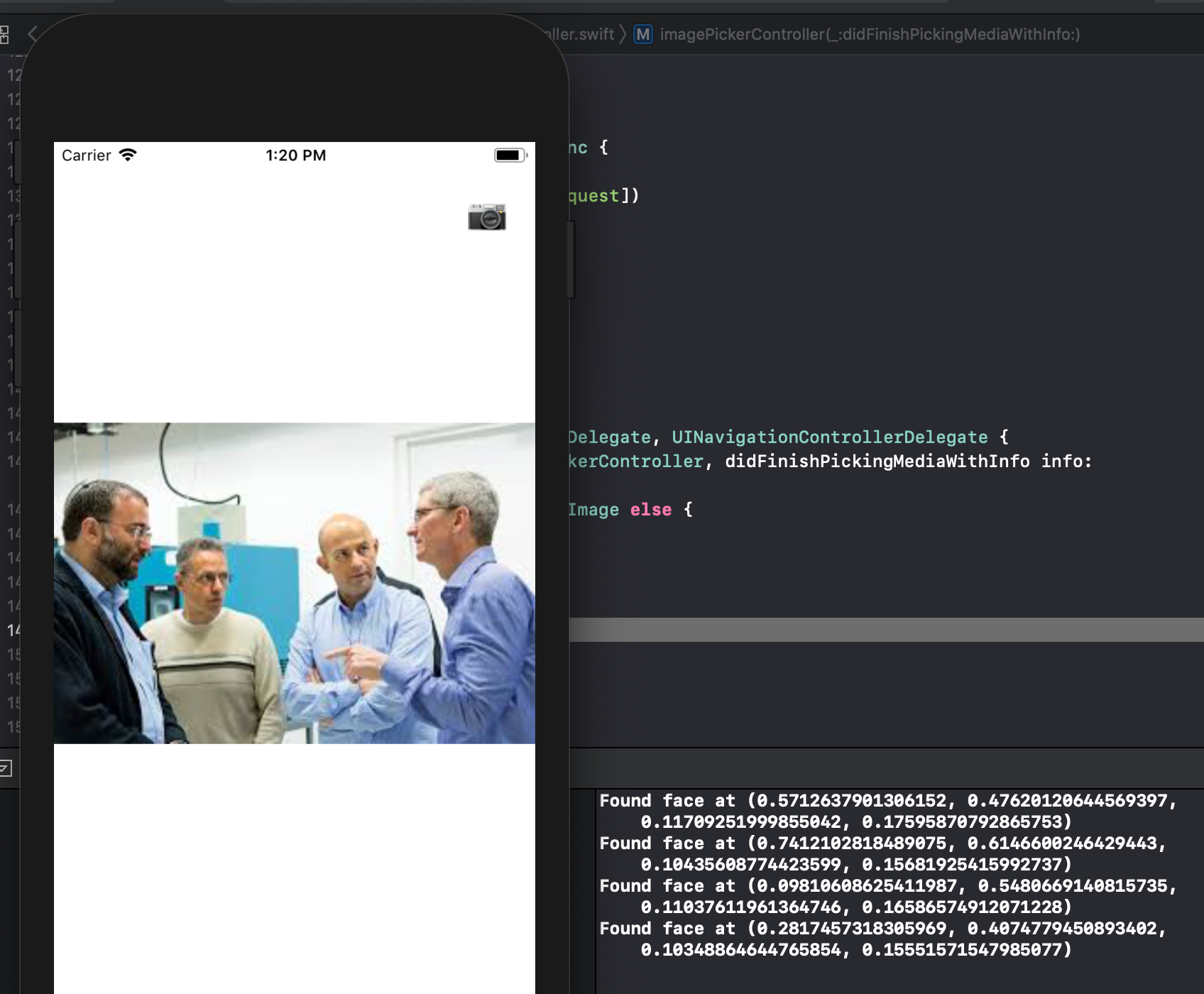

```swift
func onFacesDetected(request: VNRequest, error: Error?) {
    guard let results = request.results as? [VNFaceObservation] else {
        return
    }

    for result in results {
        print("Found face at \(result.boundingBox)")
    }
}
```

For now we'll just print the results to the console. The boundingBox property contains a normalized rectangle describing where the face is. This means its origin and size are expressed as fractions of the image's width and height (values from 0 to 1), not as absolute pixel values. We'll see how to deal with that later on in the tutorial.
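To see what "normalized" means in numbers, here is a small Foundation-only sketch. It converts a normalized rectangle into pixel coordinates by multiplying each component by the image's width or height. The function name `pixelRect(for:imageWidth:imageHeight:)` and the sample values are illustrative. The result still uses Vision's lower-left origin, and flipping it for UIKit is a separate step. Vision also offers a helper, `VNImageRectForNormalizedRect`, for this same conversion.

```swift
import Foundation

// Converts a normalized rect (fractions of the image size, lower-left
// origin) into pixel coordinates. The origin stays at the lower-left.
func pixelRect(for normalized: CGRect, imageWidth: Int, imageHeight: Int) -> CGRect {
    let w = CGFloat(imageWidth)
    let h = CGFloat(imageHeight)
    return CGRect(x: normalized.minX * w,
                  y: normalized.minY * h,
                  width: normalized.width * w,
                  height: normalized.height * h)
}

// A box spanning the middle half of a 4000 x 3000 photo horizontally,
// and from 50% to 75% of its height measured up from the bottom.
let box = CGRect(x: 0.25, y: 0.5, width: 0.5, height: 0.25)
let pixels = pixelRect(for: box, imageWidth: 4000, imageHeight: 3000)
// pixels == CGRect(x: 1000, y: 1500, width: 2000, height: 750)
```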

Next, we need to actually perform the request. We'll do this by adding the following code to the bottom of detectFaces:

```swift
DispatchQueue.global(qos: .userInitiated).async {
    do {
        try handler.perform([self.faceDetectionRequest])
    } catch {
        print(error)
    }
}
```

We'll hop over to a background queue so we don't block the main thread while detection is going on. There, we'll try to use the handler to perform the request. If it fails, we'll simply print the error out.

Finally, we need to call detectFaces from imagePickerController(_:didFinishPickingMediaWithInfo:), so add the following line to that method in the extension, right above the call to dismiss:

```swift
detectFaces(on: image)
```

If you run the project now and pick an image with a face on it, you should see the faces' bounding boxes printed to the console.

Drawing Boxes

Now that we have the bounding boxes, it's time to draw them on the screen! We'll use Core Animation to create a CALayer for each face's bounding rectangle, and add them to the screen.

Start by adding the following property to the class:

```swift
let resultsLayer = CALayer()
```

This layer will hold all of the faces' rectangles. We'll add this layer to the image view by adding the following code to the bottom of viewDidLoad:

```swift
imageView.layer.addSublayer(resultsLayer)
```

As mentioned before, Vision returns rectangles that are normalized to the image. This means we need a way to convert those normalized values into actual coordinates in the image view. To do this, we first need to know where the image actually sits within the view, because it gets scaled to fit inside it. We can do this by adding this handy extension, based on one by Paul Hudson, to the top of the file:

```swift
extension UIImageView {
    var contentClippingRect: CGRect {
        guard let image = image else { return bounds }
        guard contentMode == .scaleAspectFit else { return bounds }
        guard image.size.width > 0 && image.size.height > 0 else { return bounds }

        // Aspect fit scales by the smaller of the two ratios, so the whole
        // image fits inside the view whatever the two aspect ratios are.
        // (Choosing the ratio based only on whether the image is landscape
        // overflows the view for portrait photos on a portrait screen.)
        let scale = min(
            bounds.width / image.size.width,
            bounds.height / image.size.height)

        let size = CGSize(
            width: image.size.width * scale,
            height: image.size.height * scale)

        // Center the scaled image inside the view.
        let x = (bounds.width - size.width) / 2.0
        let y = (bounds.height - size.height) / 2.0

        return CGRect(x: x, y: y, width: size.width, height: size.height)
    }
}
```
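Here is the aspect-fit calculation pulled out as a pure function, so it can be checked with concrete numbers without UIKit. The name `aspectFitRect(imageSize:in:)` and the 375 x 667 view size are illustrative assumptions. Aspect fit scales by the smaller of the width and height ratios and then centers the result. AVFoundation's `AVMakeRect(aspectRatio:insideRect:)` computes an equivalent rectangle, if you'd rather not maintain this math yourself.

```swift
import Foundation

// Aspect-fit math as a pure function: scale by the smaller ratio, then center.
func aspectFitRect(imageSize: CGSize, in bounds: CGRect) -> CGRect {
    guard imageSize.width > 0, imageSize.height > 0 else { return bounds }
    let scale = min(bounds.width / imageSize.width,
                    bounds.height / imageSize.height)
    let size = CGSize(width: imageSize.width * scale,
                      height: imageSize.height * scale)
    return CGRect(x: (bounds.width - size.width) / 2,
                  y: (bounds.height - size.height) / 2,
                  width: size.width,
                  height: size.height)
}

let view = CGRect(x: 0, y: 0, width: 375, height: 667)

// Portrait photo: width is the limiting side (scale 0.125), so the image
// gets empty bands above and below it.
let portrait = aspectFitRect(imageSize: CGSize(width: 3000, height: 4000), in: view)
// portrait == CGRect(x: 0, y: 83.5, width: 375, height: 500)

// Landscape photo: scale is 375 / 4000 = 0.09375.
let landscape = aspectFitRect(imageSize: CGSize(width: 4000, height: 3000), in: view)
// landscape == CGRect(x: 0, y: 192.875, width: 375, height: 281.25)
```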

Now that we have the image's bounds, we can use them to denormalize our face bounding boxes. Add the following method to ViewController:

```swift
func denormalized(
    _ normalizedRect: CGRect,
    in imageView: UIImageView) -> CGRect {

    let imageSize = imageView.contentClippingRect.size
    let imageOrigin = imageView.contentClippingRect.origin

    let newOrigin = CGPoint(
        x: normalizedRect.minX * imageSize.width + imageOrigin.x,
        y: (1 - normalizedRect.maxY) * imageSize.height + imageOrigin.y)

    let newSize = CGSize(
        width: normalizedRect.width * imageSize.width,
        height: normalizedRect.height * imageSize.height)

    return CGRect(origin: newOrigin, size: newSize)
}
```

This is a lot of math, but don't panic!

Since the values in the normalized CGRect are fractions, we get the actual values by multiplying them by the image's width or height, respectively. We also add the image's origin to the new rect's origin so that it starts from the same point as the image. Finally, Vision's normalized rectangle has its origin in the lower-left corner, while UIKit and Core Animation put the origin in the top-left. That's why the y coordinate is computed from 1 minus the rectangle's maxY: the box's top edge, measured down from the top of the image instead of up from the bottom. Whew!
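To check the math with concrete numbers, here is the same denormalization as a Foundation-only function. The name `denormalize(_:into:)` is hypothetical. The `clip` parameter stands in for contentClippingRect, and the sample value is where a 3000 x 4000 portrait photo ends up when aspect-fitted into a 375 x 667 view.

```swift
import Foundation

// Same formula as denormalized(_:in:), with `clip` playing the role of
// contentClippingRect (where the image sits inside the view, in points).
func denormalize(_ normalized: CGRect, into clip: CGRect) -> CGRect {
    CGRect(
        x: normalized.minX * clip.width + clip.minX,
        // Vision's origin is bottom-left and UIKit's is top-left, so the
        // box's top edge (normalized.maxY) is flipped with 1 - maxY.
        y: (1 - normalized.maxY) * clip.height + clip.minY,
        width: normalized.width * clip.width,
        height: normalized.height * clip.height)
}

let clip = CGRect(x: 0, y: 83.5, width: 375, height: 500)
let face = CGRect(x: 0.25, y: 0.5, width: 0.5, height: 0.25)
let onScreen = denormalize(face, into: clip)
// onScreen == CGRect(x: 93.75, y: 208.5, width: 187.5, height: 125)
```

A face whose box touches the top of the photo (maxY of 1) lands exactly at the top of the clipping rect, which is a quick sanity check that the flip goes the right way.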

Now that we have all the pieces in place, it's time to put them together and actually draw these rectangles on the screen. Add the following code to the bottom of onFacesDetected:

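```swift
DispatchQueue.main.async {
    CATransaction.begin()

    // Remove the boxes drawn for any previously picked image.
    self.resultsLayer.sublayers?.forEach { $0.removeFromSuperlayer() }

    for result in results {
        self.drawFace(in: result.boundingBox)
    }

    CATransaction.commit()
}
```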

Since the completion handler runs on the background queue where we performed the request, not on the main thread, we need to hop back to the main thread to update the UI. We'll perform all updates inside a CATransaction so that they're applied together in a single pass. For each bounding box, we'll draw a rectangle. The final key to this puzzle is adding the drawFace method to the class:

```swift
private func drawFace(in rect: CGRect) {
    let layer = CAShapeLayer()
    let rect = self.denormalized(rect, in: self.imageView)

    layer.path = UIBezierPath(rect: rect).cgPath
    layer.strokeColor = UIColor.yellow.cgColor
    layer.fillColor = nil
    layer.lineWidth = 2

    self.resultsLayer.addSublayer(layer)
}
```

This method creates a new layer and draws the denormalized rectangle with a yellow outline. It then adds this layer to the results layer.

If you run the project now, you should see a yellow rectangle around people's faces!

And that's it! You can find the full code of this tutorial on GitHub.

Other Cool Machine Learning Tools

This Vision tutorial only scratched the surface of machine learning on iOS. You can adapt this code to detect facial features like eyes or noses by switching to VNDetectFaceLandmarksRequest. You can also combine Core ML with Vision to run custom object detection models on images. Or, better yet, use Create ML to train your own models. There are also other frameworks, like Natural Language. You can learn more about the iOS machine learning landscape in our article on iOS machine learning!